A deep dive into Real User Monitoring

In today’s digital landscape, understanding how users interact with applications is essential to improving performance and enhancing user experience. For organizations using SAP systems, Real User Monitoring within SAP Cloud ALM for Operations provides powerful insights into the behavior, performance, and usage patterns of end-users, whether they are accessing applications in the cloud or on-premise.

This tool is particularly valuable for IT and business users seeking to optimize application performance and boost user satisfaction.

Real User Monitoring tracks and analyzes user requests within managed SAP environments. It records user interactions and captures data on performance, response times, and overall application usage. This information offers a window into the user’s experience, showing how frequently applications are accessed and how responsive they are during use.

Let’s explore the application and examine the features it offers.

Overview

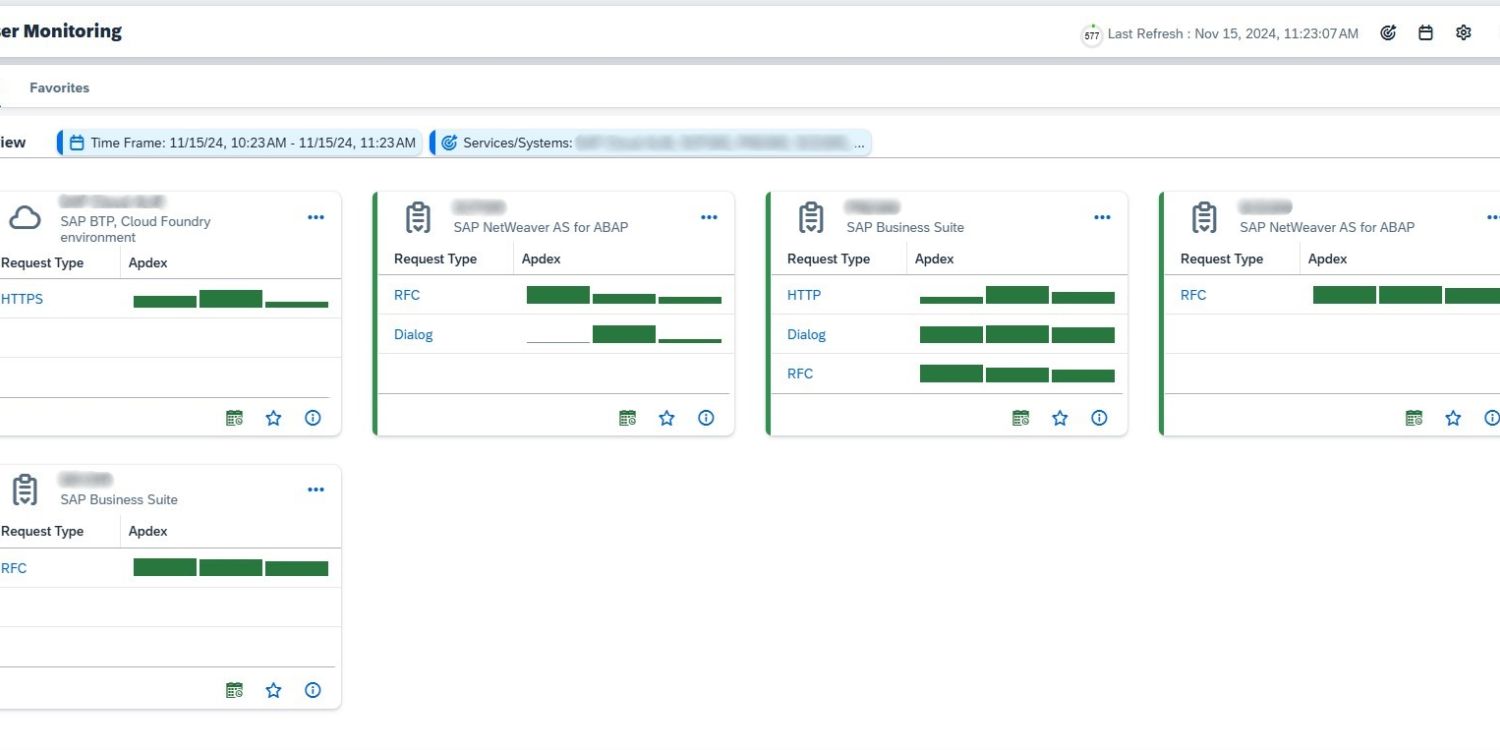

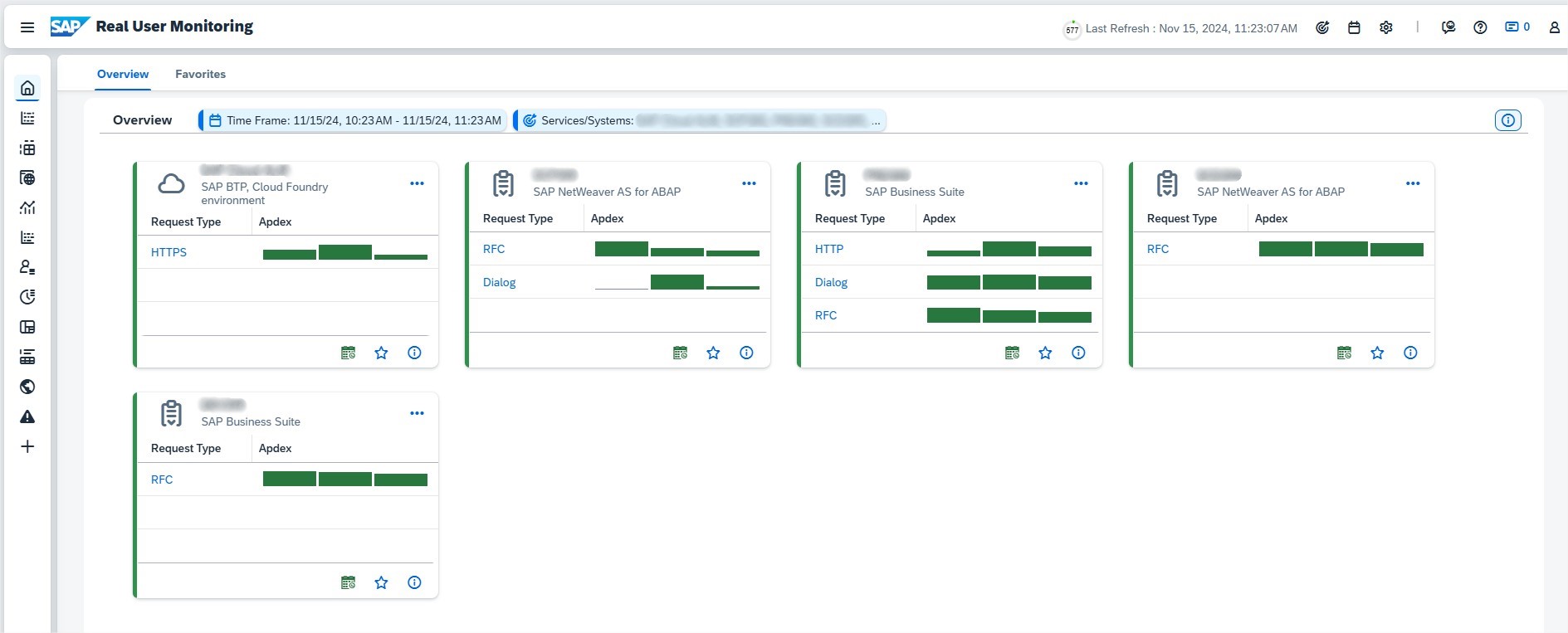

In the overview section of Real User Monitoring, you’ll find a clear visualization of the services selected within the defined scope, ranked by decreasing criticality. The data displayed is aligned with the chosen time frame, providing a focused snapshot of performance trends.

Each tile highlights the evolution of the Application Performance Index (Apdex) over time for the three most critical request types of a single service, with the service name and type prominently displayed in the tile header.

The bars within each request type provide additional insights: their size represents the number of executions, while their color indicates the Apdex rating. Hovering over a bar reveals a tooltip with detailed information, including the start time, Apdex value, and execution count.

On the left side of the tile, a visual indicator reflects the average service rating based on the displayed request types, providing an at-a-glance assessment of overall service performance.
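To make the Apdex values in the tiles more concrete, here is a minimal sketch of the standard Apdex formula: executions at or below a target threshold T count as satisfied, executions between T and 4T count as tolerating (weighted at one half), and slower ones count as frustrated. The threshold of 500 ms and the sample response times are assumptions for illustration only; the article does not state which thresholds SAP Cloud ALM applies.

```python
def apdex(response_times_ms, threshold_ms):
    """Return the Apdex score (0.0 to 1.0) for a list of response times."""
    if not response_times_ms:
        raise ValueError("at least one response time is required")
    # Satisfied: at or below the target threshold T.
    satisfied = sum(1 for t in response_times_ms if t <= threshold_ms)
    # Tolerating: above T but no more than 4T; counted at half weight.
    tolerating = sum(
        1 for t in response_times_ms if threshold_ms < t <= 4 * threshold_ms
    )
    return (satisfied + tolerating / 2) / len(response_times_ms)


# Hypothetical sample: five executions of one request type.
samples_ms = [120, 300, 450, 800, 2500]
print(f"Apdex: {apdex(samples_ms, threshold_ms=500):.2f}")
```

A score close to 1.0 means nearly all executions met the target, while a score near 0.0 means most users waited far longer than the threshold.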

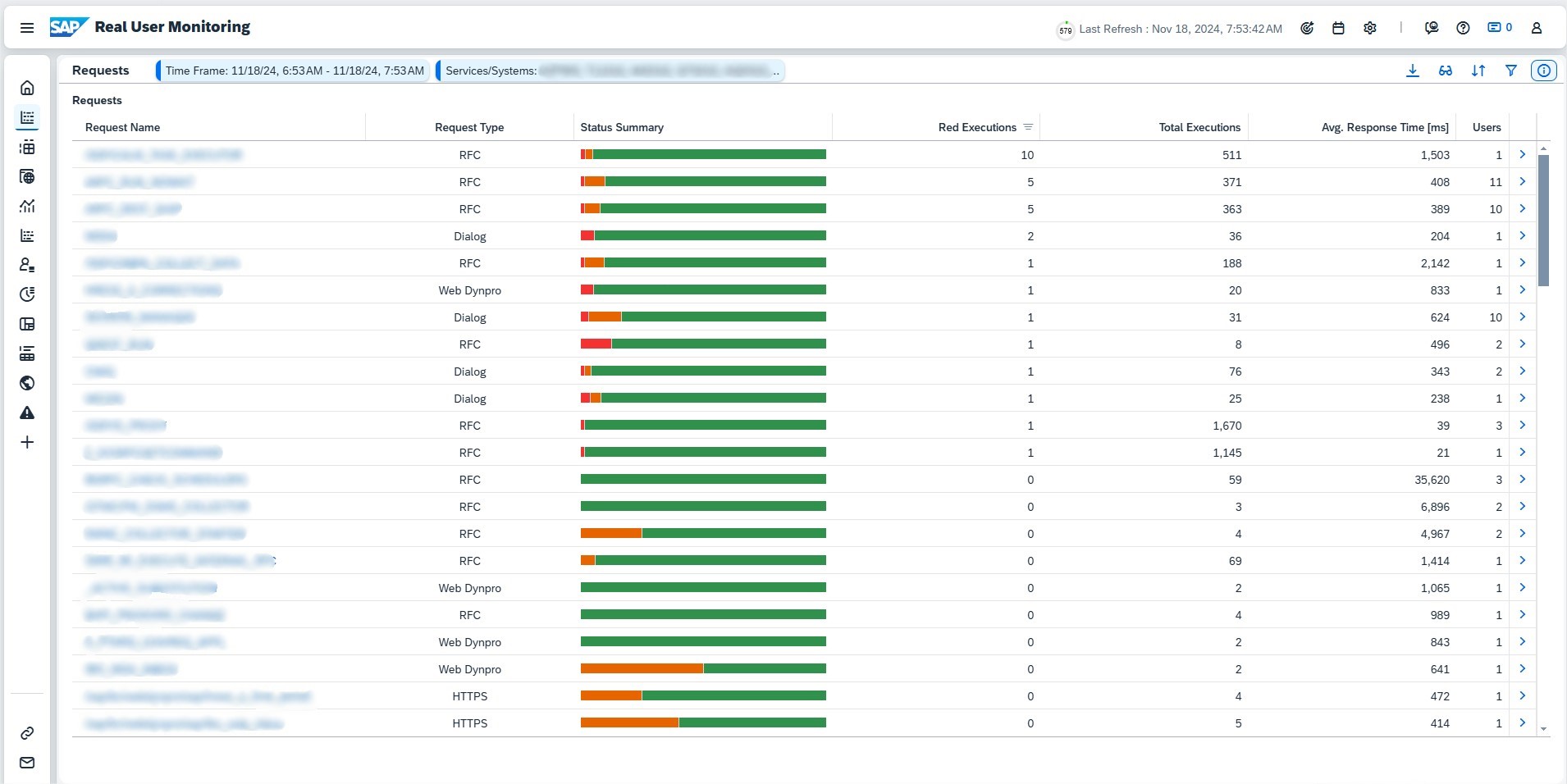

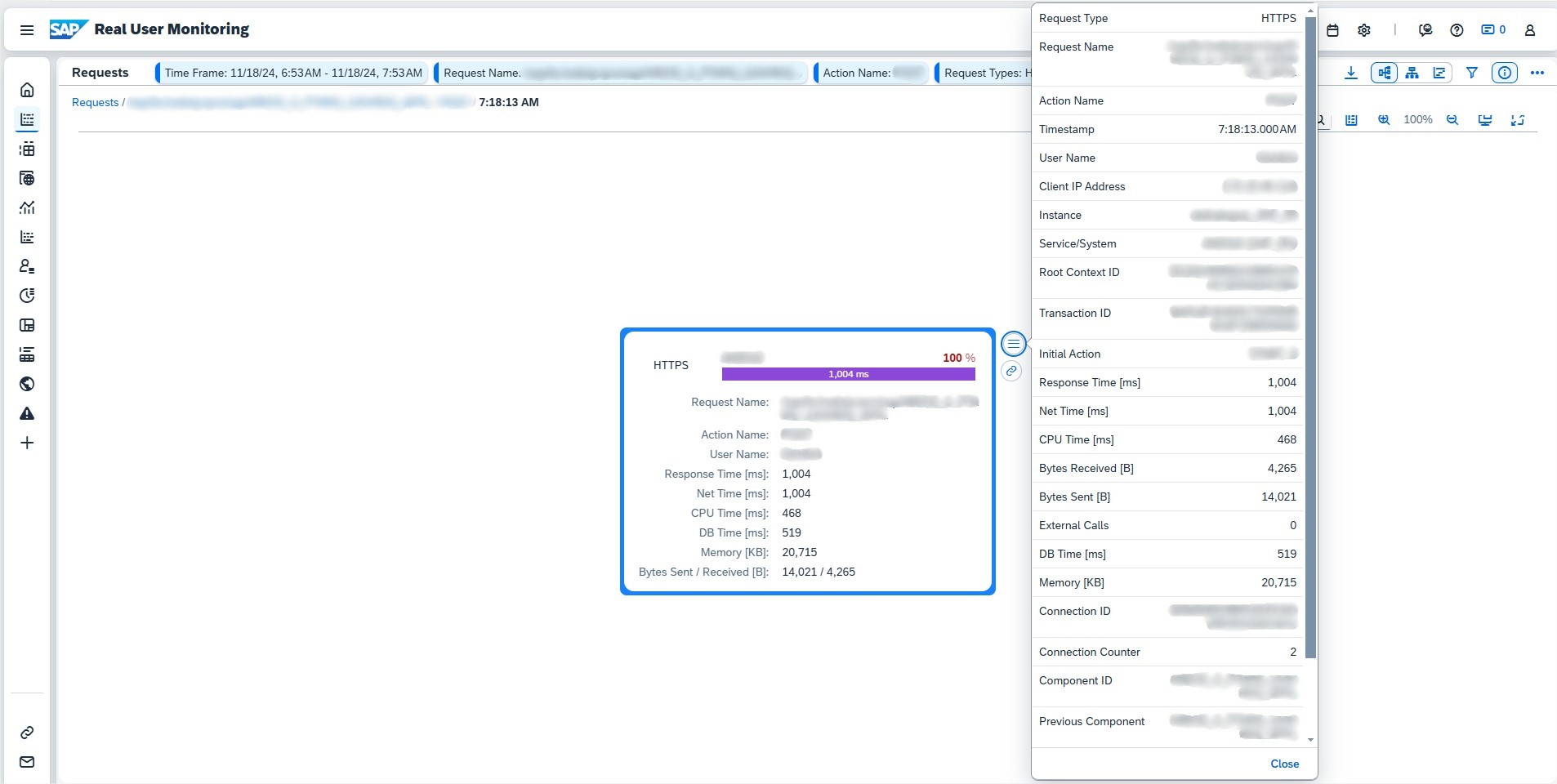

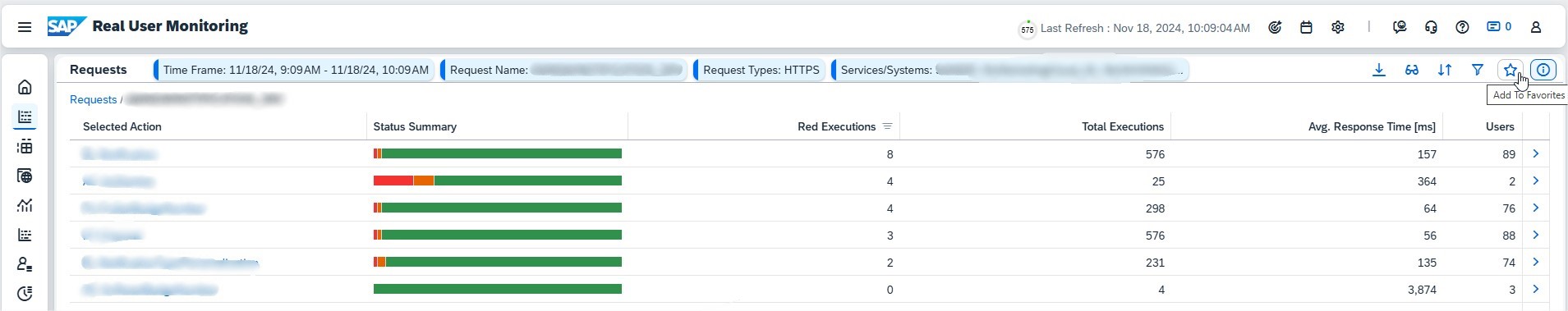

Requests

For each request, you can easily view key details, including its name and type, status (Critical, Warning, or OK), execution frequency, average response time, and the number of associated users. By default, the list is sorted by the number of critical executions (marked in red), ensuring the most urgent issues are prioritized.

Clicking the Details icon beside a request opens three deeper levels of insights:

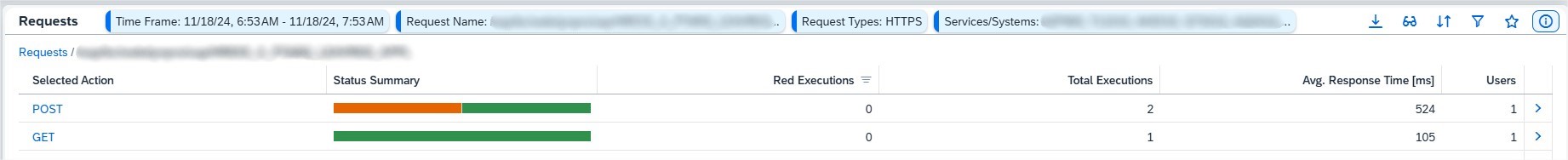

- Request Actions: Actions are categorized based on the request type, such as HTTP(S) methods like GET or POST, SAPUI5 actions triggered by UI elements, Web Dynpro events, Web GUI interactions, or RFC function groups.

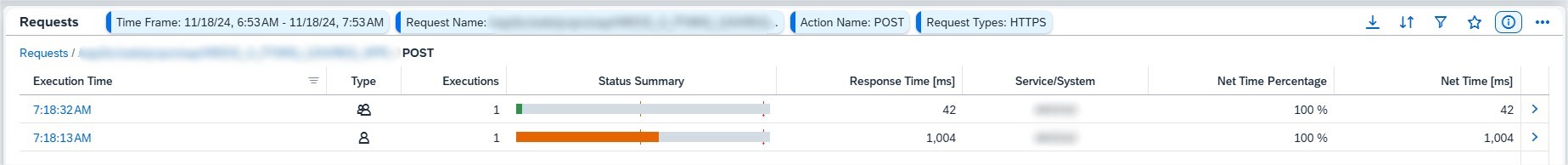

- Execution Analysis: View execution patterns during the selected timeframe. A low net time for critical rows indicates the issue may lie outside the current service, requiring further investigation.

- Execution Details: This level provides a granular look at a single execution, including correlated requests from other components. You can also choose from different visualizations to tailor the analysis to your needs.

The request status color is tied to response times:

- Critical (Red): Response time exceeds the median by at least twice the standard deviation.

- Warning (Yellow): Response time exceeds the median by at least one standard deviation.
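The rating rule above can be sketched in a few lines. This is an illustration under stated assumptions, not the product's implementation: the function name `rate_execution` is hypothetical, the population standard deviation is used (the article does not say whether the sample or population variant applies), and a history with zero spread is simply rated OK.

```python
from statistics import median, pstdev


def rate_execution(response_time_ms, history_ms):
    """Rate one execution against the median and standard deviation of its history."""
    med = median(history_ms)
    sd = pstdev(history_ms)  # assumption: population standard deviation
    if sd == 0:
        # Assumption: with no spread there is nothing to deviate from.
        return "OK"
    if response_time_ms >= med + 2 * sd:
        return "Critical"
    if response_time_ms >= med + sd:
        return "Warning"
    return "OK"


# Hypothetical history of earlier executions of the same request.
history = [100, 110, 120, 130, 140]
for candidate in (125, 140, 150):
    print(candidate, rate_execution(candidate, history))
```

With this history the median is 120 ms and the standard deviation is about 14 ms, so the Warning boundary sits near 134 ms and the Critical boundary near 148 ms.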

These detailed insights help identify performance bottlenecks and drive focused troubleshooting efforts.

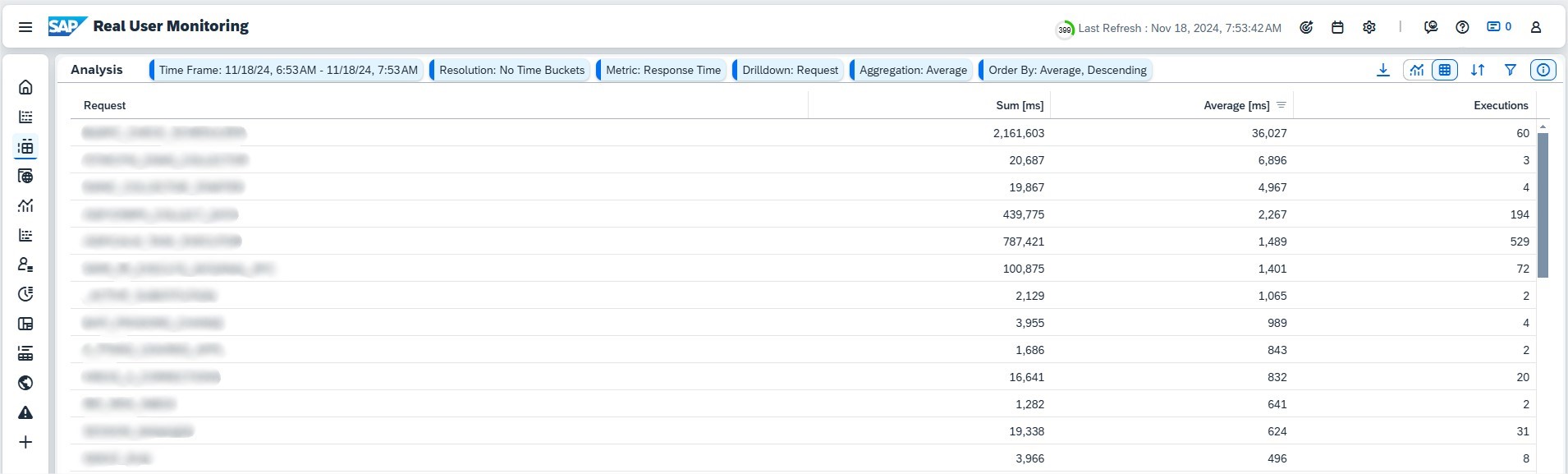

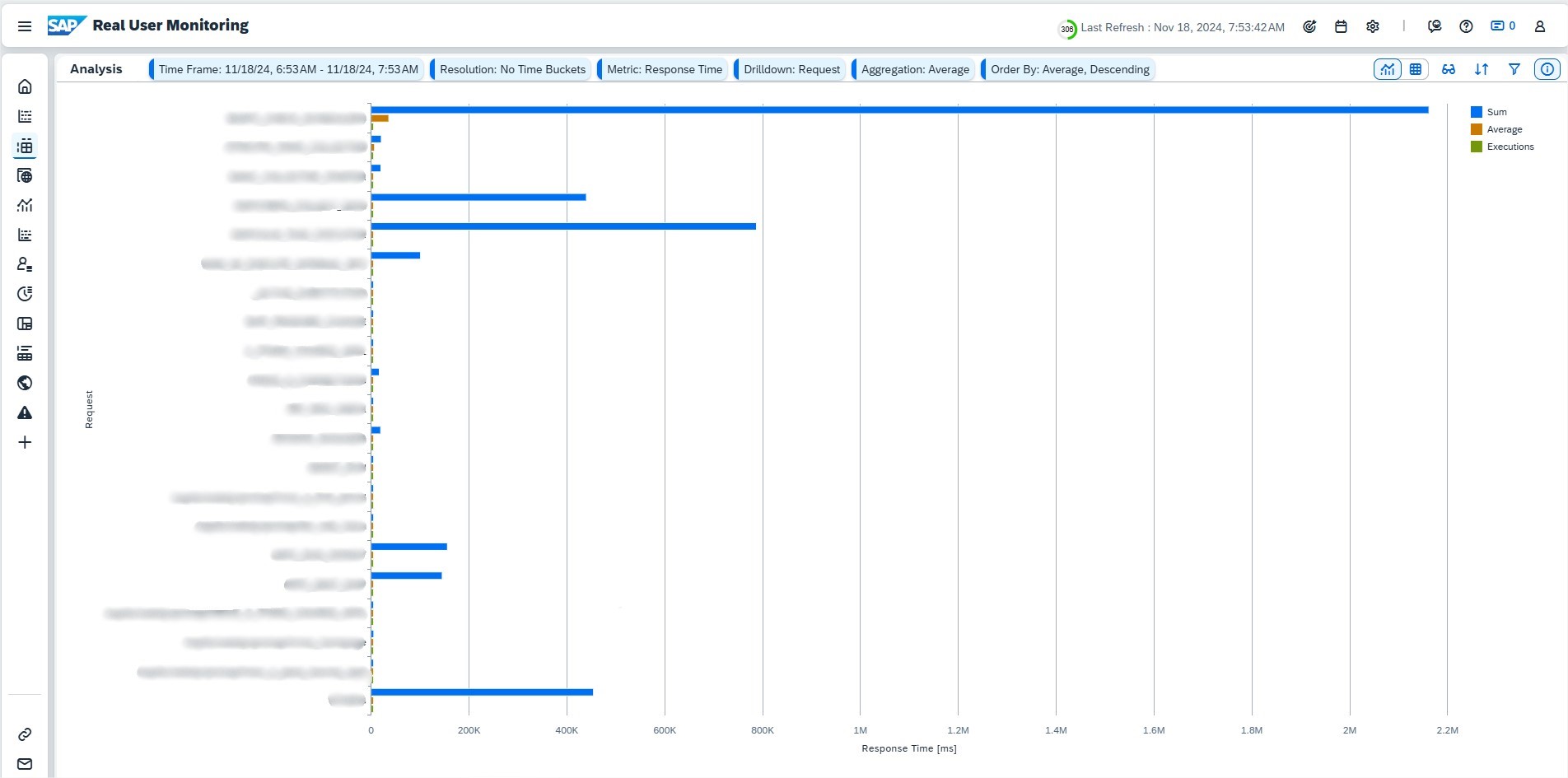

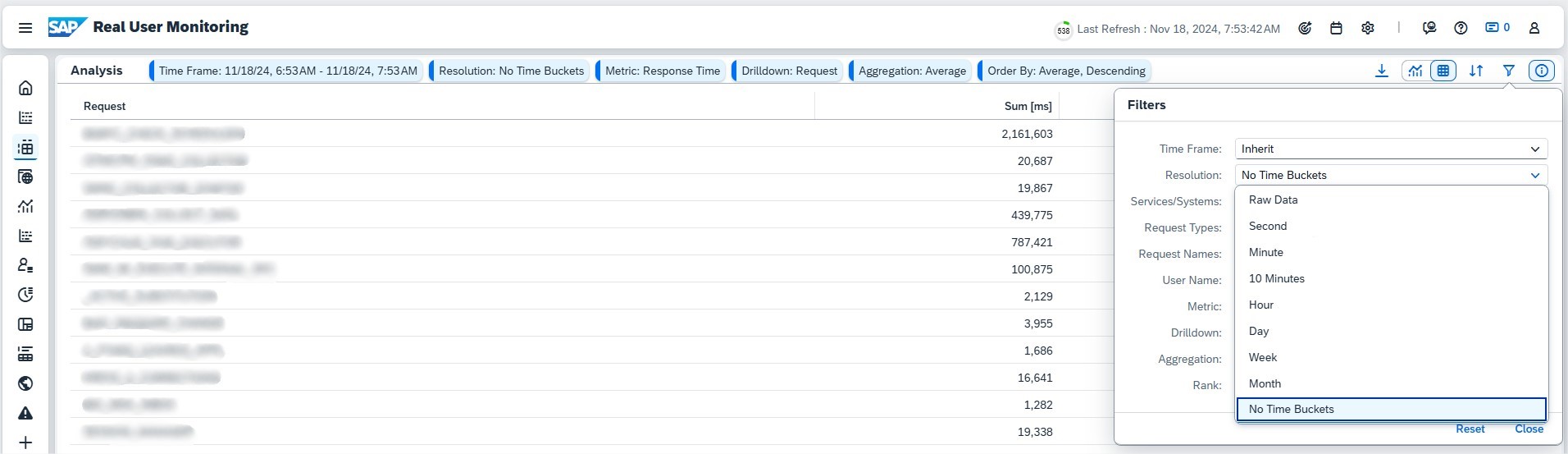

Analysis

The Analysis page provides powerful tools to break down request metrics across various dimensions, offering a wide range of customizable analysis options. You can fine-tune the display settings using the Filter popover to focus on the data most relevant to your needs.

The Analysis page supports two primary display formats:

- Table View

  - Use the Drilldown control to select dimensions displayed as columns, reorder them via drag-and-drop, and sort using the Sort option.

  - Choose a single metric (Sum, Average, or Count) to focus your analysis.

  - Ideal for detailed, tabular comparisons across dimensions.

- Chart View

  - Best for visualizing trends over time.

  - Select a Resolution and Time Frame to generate line charts showing metric development.

  - For non-temporal analysis, set Resolution to “No Time Buckets” to create horizontal bar charts.

The Drilldown control lets you activate and arrange dimensions, which define table columns or chart categories. Metrics calculations include:

- Sum: The total of all request values.

- Average: The sum divided by the count.

- Count: The total number of requests.
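As a rough illustration of how these three metrics relate when requests are grouped by the dimensions chosen in Drilldown, the sketch below aggregates a handful of made-up request records. The field names (`request_type`, `service`, `response_time_ms`) and the `aggregate` helper are assumptions for this example and do not reflect the application's data model.

```python
from collections import defaultdict


def aggregate(requests, dimensions, metric="response_time_ms"):
    """Group requests by the given dimensions and compute Sum, Count, and Average."""
    groups = defaultdict(list)
    for request in requests:
        key = tuple(request[d] for d in dimensions)
        groups[key].append(request[metric])
    return {
        key: {
            "sum": sum(values),
            "count": len(values),
            "average": sum(values) / len(values),  # Sum divided by Count
        }
        for key, values in groups.items()
    }


# Hypothetical request records.
requests = [
    {"request_type": "SAPUI5", "service": "S1", "response_time_ms": 200},
    {"request_type": "SAPUI5", "service": "S1", "response_time_ms": 400},
    {"request_type": "RFC", "service": "S2", "response_time_ms": 90},
]
for key, metrics in aggregate(requests, ["request_type"]).items():
    print(key, metrics)
```

Adding a second dimension, for example `["request_type", "service"]`, corresponds to activating another column in the table view.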

For time-specific breakdowns, choose a time resolution in Resolution.

The Analysis page delivers a flexible, dimension-based view of request metrics, making it easy to uncover trends and actionable insights tailored to your operational needs.

Front End

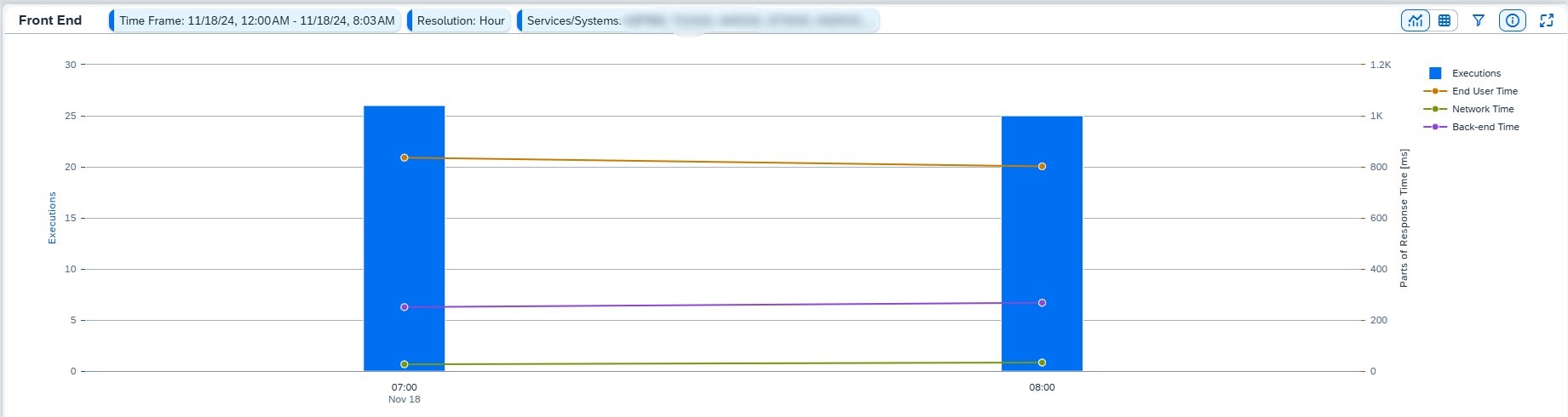

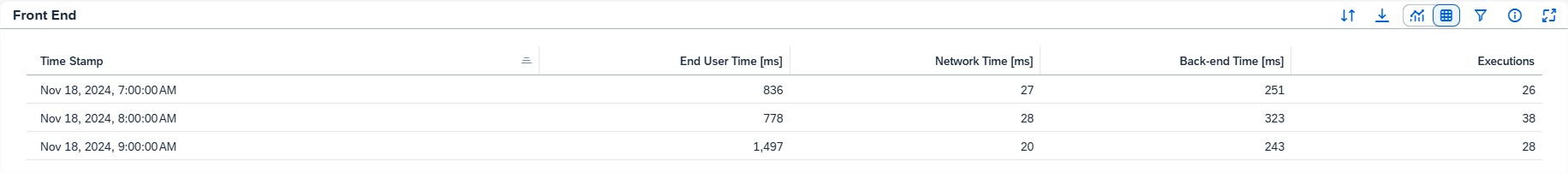

The Front End page offers key usage and performance metrics for front-end request types like SAPUI5, Web Dynpro, and Web GUI. This section provides a detailed view of how applications perform from the end-user perspective, helping you identify and address potential bottlenecks.

The Front End section includes the following metrics:

- Executions: Total number of requests executed within the selected period.

- End User Time: Response time experienced by the user.

- Network Time: Time spent in network roundtrips between the front end and server.

- Back-End Time: Processing time on the server.

You can visualize these metrics as a Chart or a Table, adjusting the display using the Filter option to select specific requests, time frames, and resolution levels.

By default, metrics are shown for the current week with an hourly resolution, independent of global time frame settings. To align this page with global settings, select Inherit in the Filter popover.

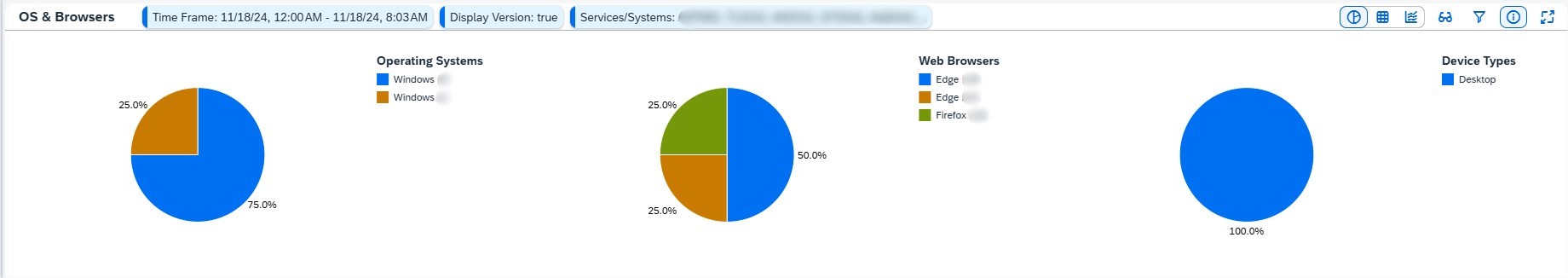

The OS & Browsers section provides an overview of operating systems, browsers, and devices used by end-users, along with their respective user counts. Depending on the Display Version setting in the filter:

- User counts are shown by browser and OS types (e.g., Windows, Chrome).

- Alternatively, they are broken down by specific versions (e.g., Windows 10).

Clicking on a segment in the pie charts reveals individual users for a selected OS or browser. User data is anonymized if the viewer lacks the Real User Analyst Sensitive role, ensuring sensitive information remains protected.

The Front End page delivers actionable insights into user behavior and performance, enabling proactive measures to enhance the overall experience.

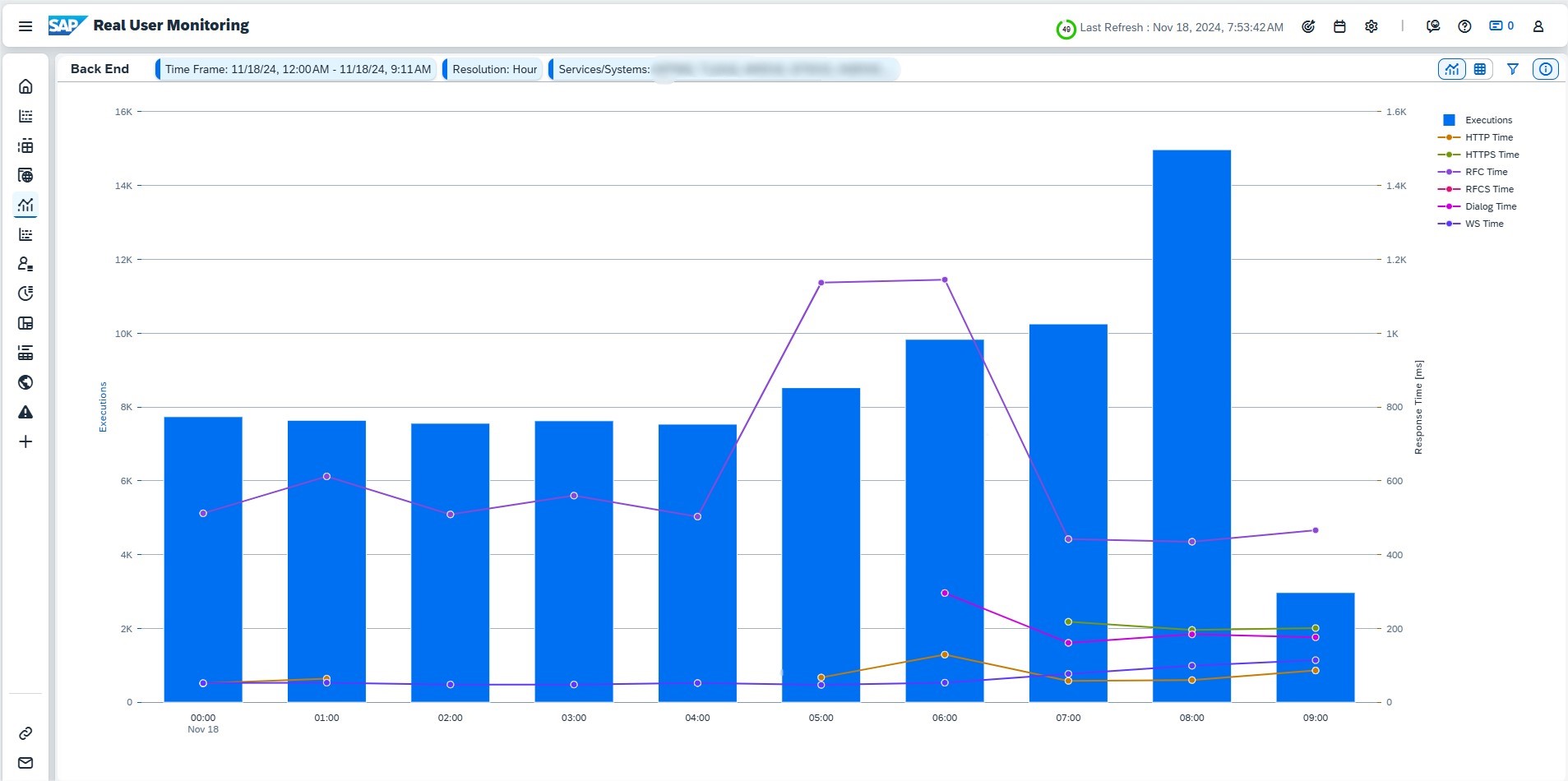

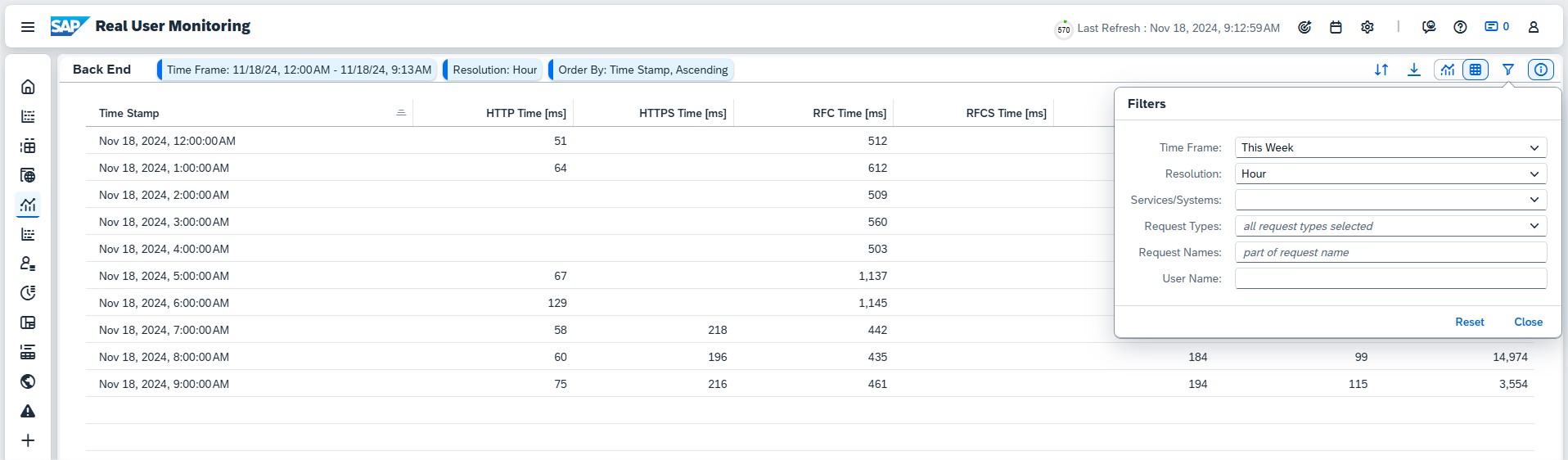

Back End

The Back End page provides an essential overview of performance and usage metrics for back-end requests, helping you monitor and optimize system performance.

By default, the page shows response times and execution counts for these back-end request types:

- HTTP

- HTTPS

- RFC

- RFCS

- Dialog

Metrics can be displayed as a Chart or Table, and the Filter option allows you to refine the view by selecting specific requests, time frames, and resolutions. If you have the Real User Analyst Sensitive role, you can also filter data by specific users for deeper insights.

By default, metrics from the current week are displayed with an hourly resolution. This setting is independent of the global time frame. To align with global settings, choose Inherit in the Filter popover.

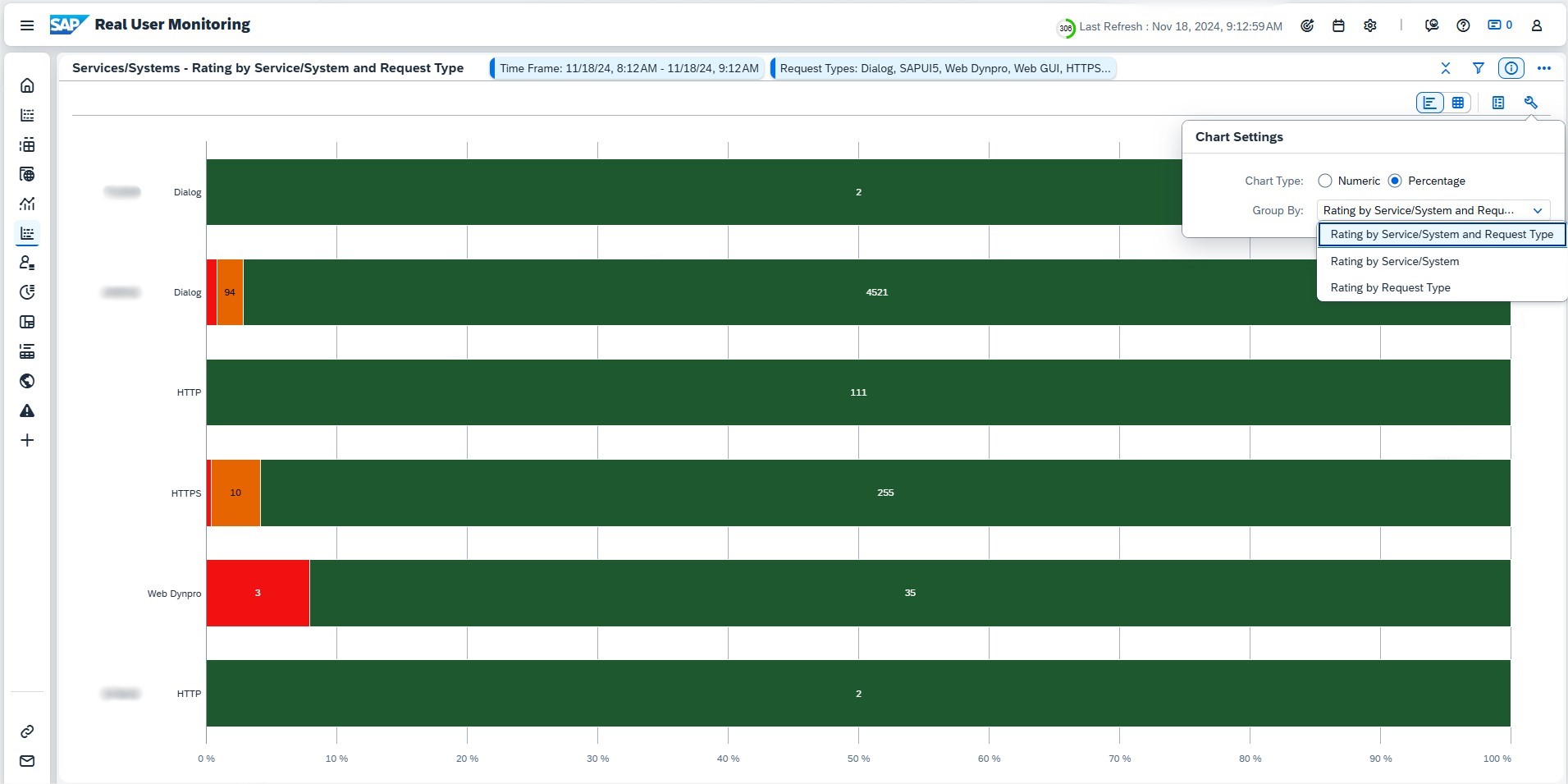

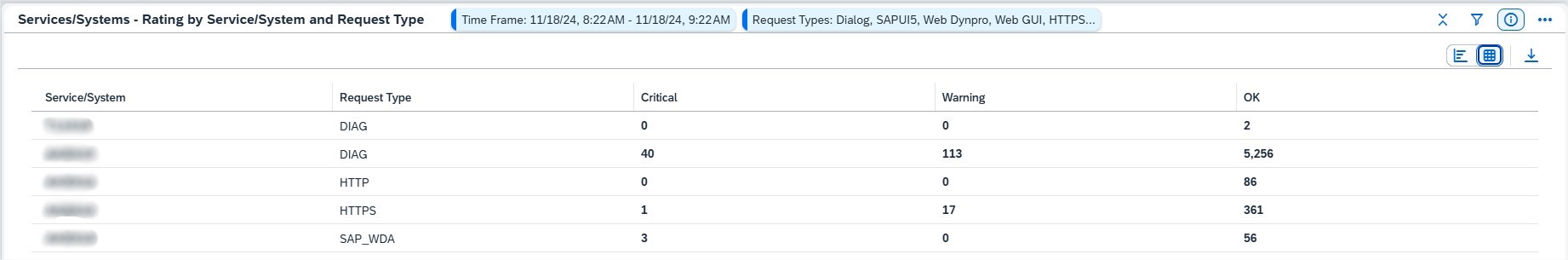

Services/Systems

The Services/Systems page provides an overview of request performance grouped by services and systems, making it easy to identify entities with poor performance or high request volumes.

Key Features

- View ratings for each service/system and request type.

- Identify how many requests are executed for a particular request type and determine which service handles the highest volume.

- If one entity dominates, you can display values as percentages by expanding the toolbar and selecting Chart Settings.

The status color of requests reflects their response times:

- Critical (Red): Response time exceeds the median by at least twice the standard deviation.

- Warning (Yellow): Response time exceeds the median by at least one standard deviation.

The Services/Systems page helps you pinpoint performance issues and better understand how services handle request loads, enabling targeted improvements.

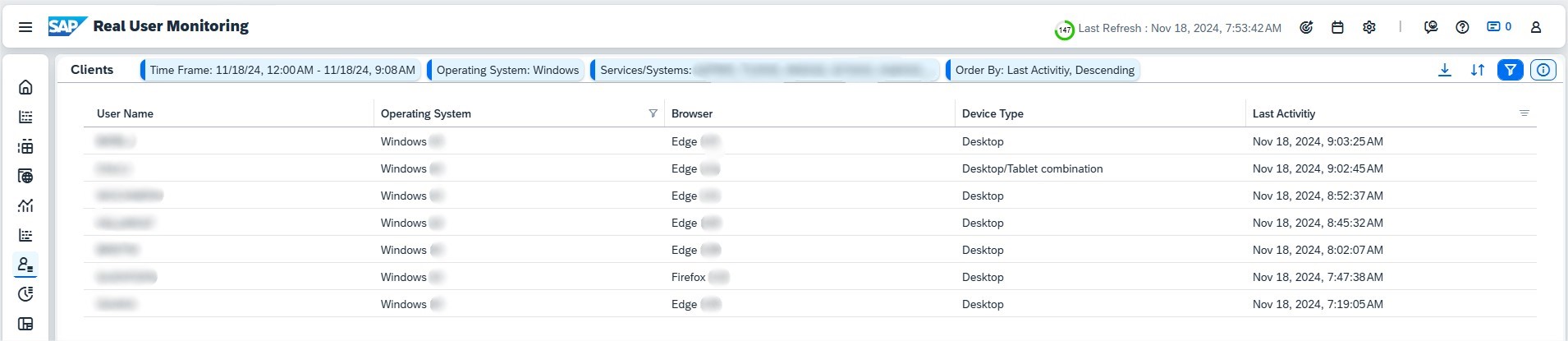

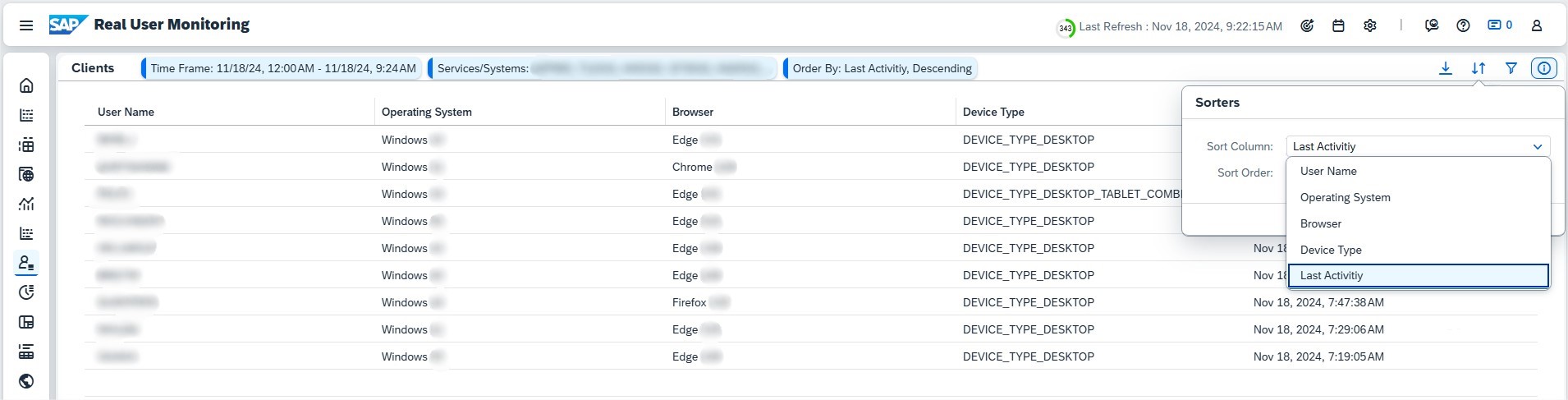

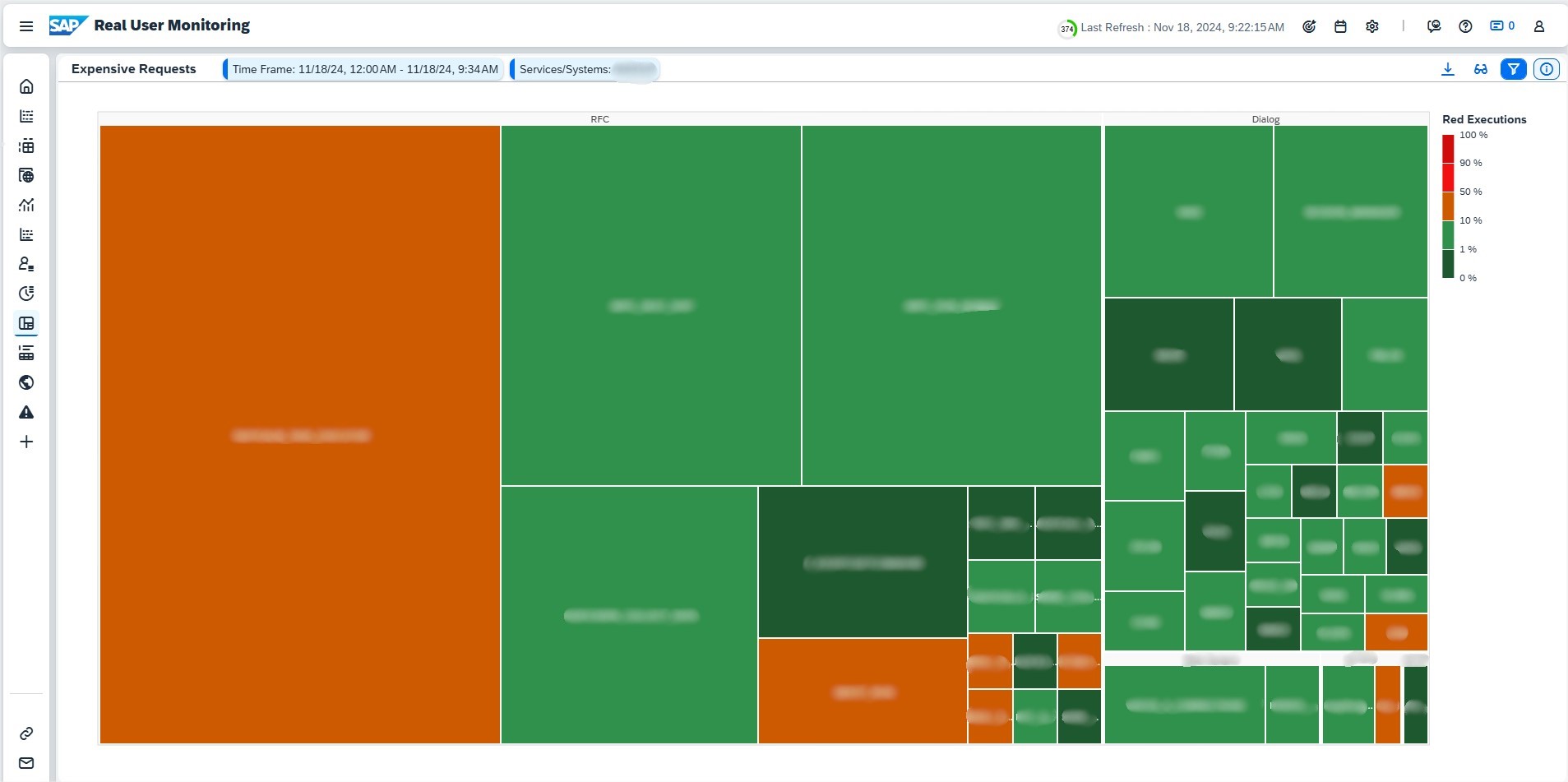

Clients

The Clients page provides detailed information about the operating systems, browsers, and device types used by users for the following front-end request types:

- SAPUI5

- Web Dynpro

- Web GUI

Key Features

- Gain insights into the technologies your users rely on, categorized by OS, browser, and device type.

- For an overview of user counts, refer to the OS & Browsers section on the Front End page.

- If you lack the Real User Analyst Sensitive role, user names are anonymized to ensure data privacy.

Filtering Options

- Use the general Filter to refine data by operating system, browser, and device type (e.g., Windows or Chrome).

- For version-specific filtering (e.g., Windows 10), use the filter option in the corresponding table column.

The Clients page offers valuable insights into user environments, helping you understand usage patterns and optimize for a diverse range of devices and platforms.

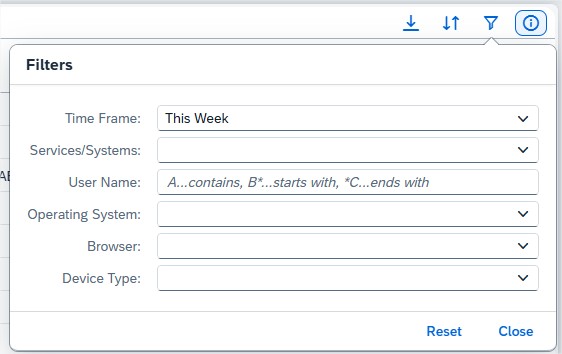

Execution Flow

The Execution Flow page offers a chronological view of user actions and corresponding system responses, enabling you to analyze usage patterns and pinpoint potential system issues.

By default, no data is displayed. To begin, provide a valid User Name or Root Context ID in the Filter:

- The Root Context ID identifies a session, remaining consistent even when requests are sent to different servers, such as when launching an app from the SAP Fiori Launchpad.

- If you lack the Real User Analyst Sensitive role, only the Root Context ID can be used, and user names will not be visible.

Once results are loaded, the page lists the activities whose backend response time exceeds 200 ms. Key columns include:

- App/UI Component: Front-end application used.

- User Interaction: Technical name of the user’s action.

- UI Response Time [ms]: Time taken for the UI to respond.

- Request Name/Backend Component: Server request triggered.

- Backend Action: Operation performed on the server.

- Response Time [ms]: Server processing time.

- Net Time [ms]: Time spent in the component itself, that is, its response time minus the time spent on outgoing requests.

- Time (based on Server): Timestamp of the action.
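The relationship between Response Time and Net Time can be sketched as a simple subtraction. The helper below is a hypothetical illustration that assumes the outgoing requests run one after another (overlapping calls would need a different calculation), and the numbers are made up.

```python
def net_time_ms(response_time_ms, outgoing_request_times_ms):
    """Return the time spent in the component itself, excluding outgoing requests."""
    # Assumption: outgoing requests are sequential, so their durations add up.
    return response_time_ms - sum(outgoing_request_times_ms)


# Hypothetical execution: 900 ms in total, two outgoing calls of 300 ms and 450 ms.
response_ms = 900
outgoing_ms = [300, 450]
net_ms = net_time_ms(response_ms, outgoing_ms)
print(f"Net time: {net_ms} ms of {response_ms} ms")
```

Here only 150 ms of the 900 ms are spent in the component itself, which suggests that the slow part lies in one of the called components rather than in the current one.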

You can click on any component or action to navigate directly to the Requests page, applying relevant filter settings for a focused drilldown.

The Execution Flow page provides a comprehensive overview of user activities and backend processes, helping you track actions, assess performance, and investigate issues in real-time.

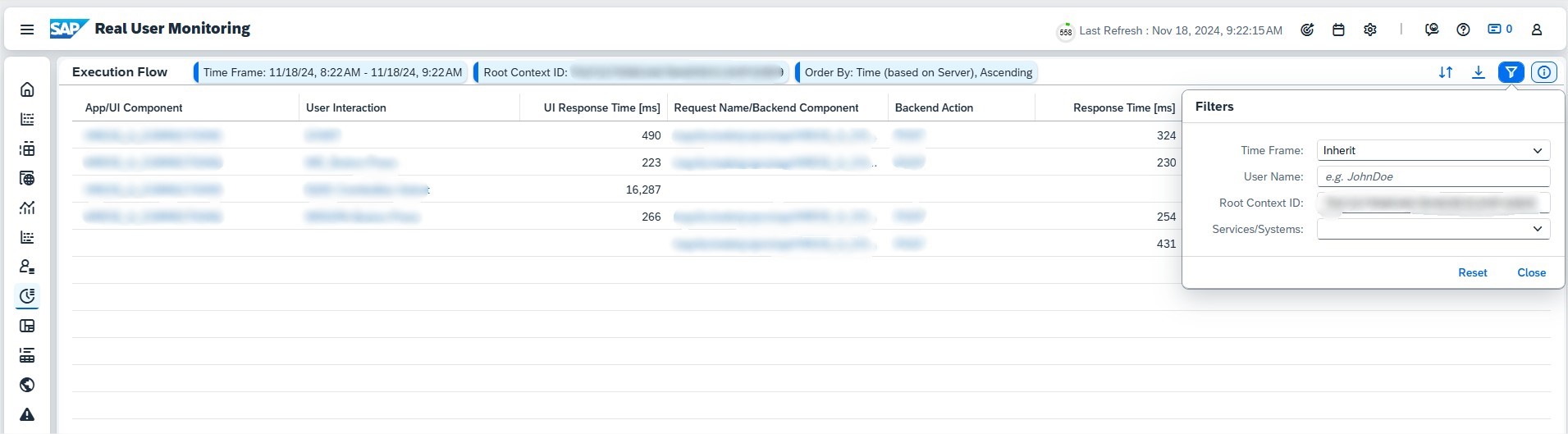

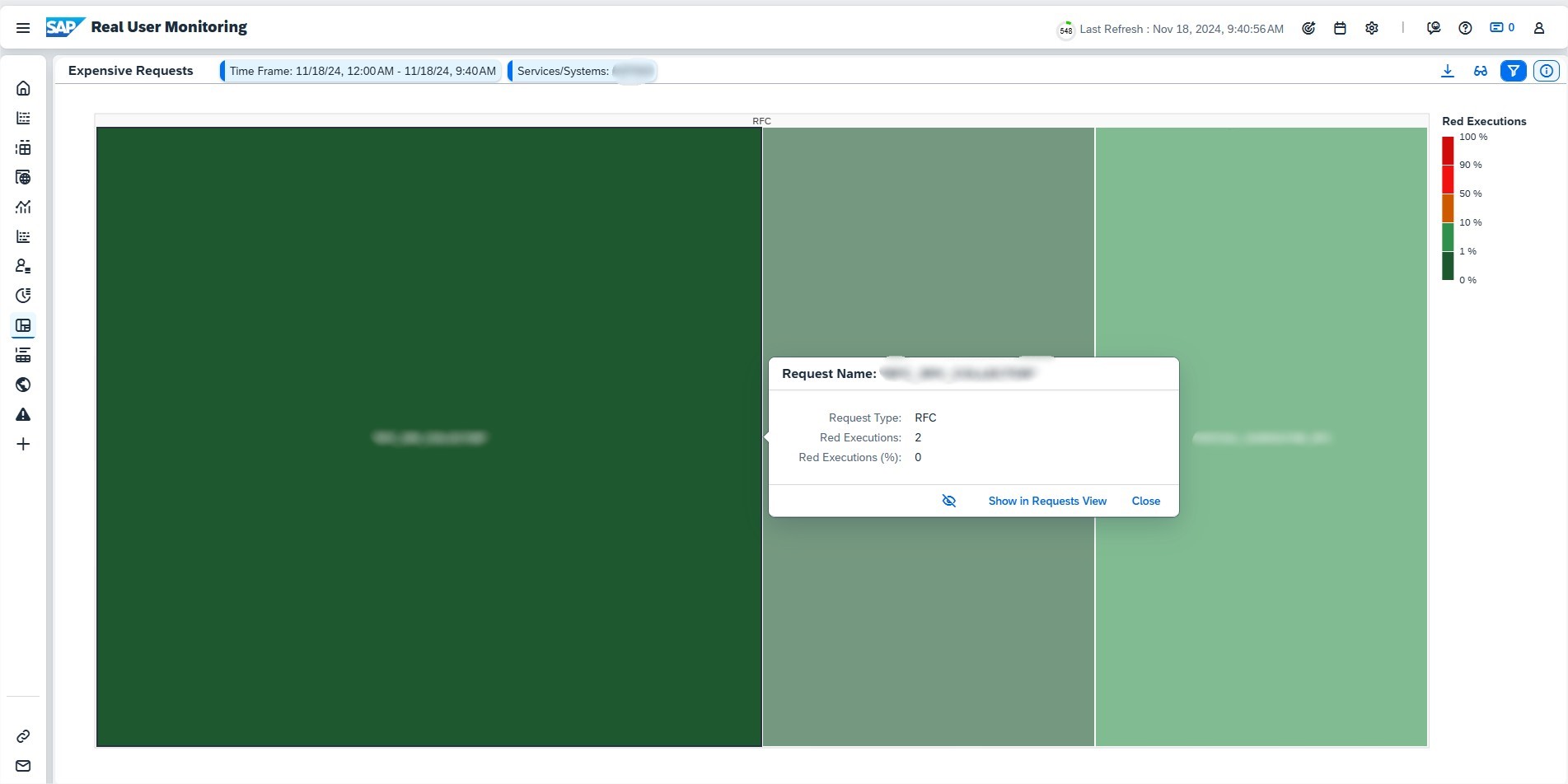

Expensive Requests

The Expensive Requests page highlights the most resource-intensive and critical request names across your services and systems, enabling you to identify potential bottlenecks and optimize performance.

Key Features

- Displays up to 200 request names by default, ranked by resource consumption or criticality. You can adjust this limit in the Filters under the Top field.

- Results appear as a tree map with squares representing request names, grouped by request types.

Tree Map Details

- Square Size: Depends on the selected Display Mode.

- Square Color: Reflects the percentage of “red” (critical) executions. A request is “red” if its response time is at least 12 times the median response time for its type. Color thresholds are shown in the legend.

Choose from three views in Display Mode:

- Performance (Default): Highlights request names with the most red executions.

- Workload: Focuses on requests with the highest total response time, calculated as the product of execution count and average response time.

- Usage: Shows request names with the highest number of unique calls, representing the breadth of user activity.
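The two calculations named above, the share of red executions and the workload, can be sketched as follows. The function names and sample values are assumptions for illustration; only the factor of 12 and the workload formula (execution count times average response time) come from the description above.

```python
def red_share_percent(response_times_ms, type_median_ms, factor=12):
    """Return the percentage of executions at or above factor times the type median."""
    if not response_times_ms:
        raise ValueError("at least one execution is required")
    red = sum(1 for t in response_times_ms if t >= factor * type_median_ms)
    return 100 * red / len(response_times_ms)


def workload_ms(execution_count, average_response_time_ms):
    """Return the total response time: execution count times average response time."""
    return execution_count * average_response_time_ms


# Hypothetical request name with four executions; median for its request type: 100 ms.
executions_ms = [100, 150, 1300, 2400]
print("Red share:", red_share_percent(executions_ms, type_median_ms=100), "%")
print("Workload:", workload_ms(execution_count=200, average_response_time_ms=350), "ms")
```

In Performance mode a request name with a high red share stands out, while in Workload mode a fast but very frequently executed request can dominate the tree map.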

Click on any square to display the corresponding request name in the Request Overview. If a single request name dominates the tree map, click Hide to remove it from the view and get a better overview of the remaining request names. Hidden request names are listed in the Filters with an exclamation point (!) as a prefix.

The Expensive Requests page offers a clear visual representation of resource-heavy requests, helping you prioritize optimizations and improve overall system efficiency.

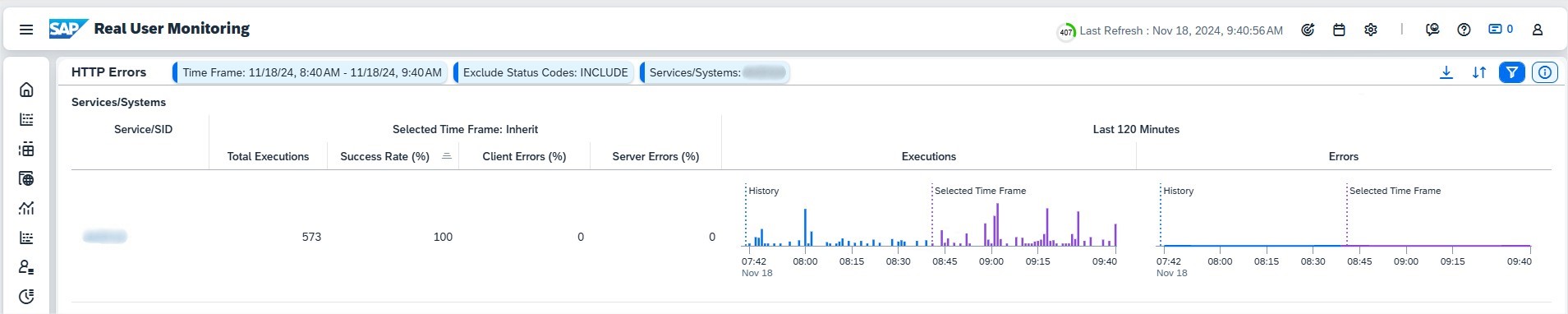

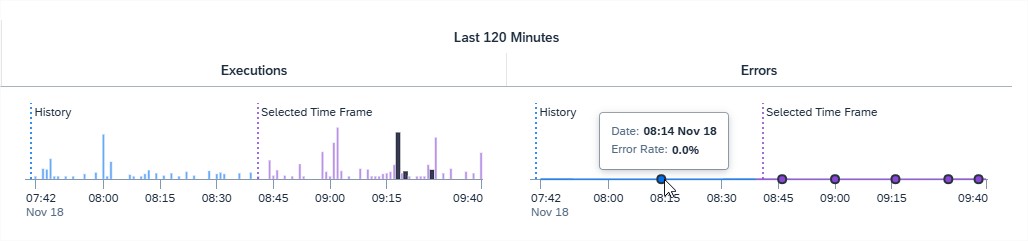

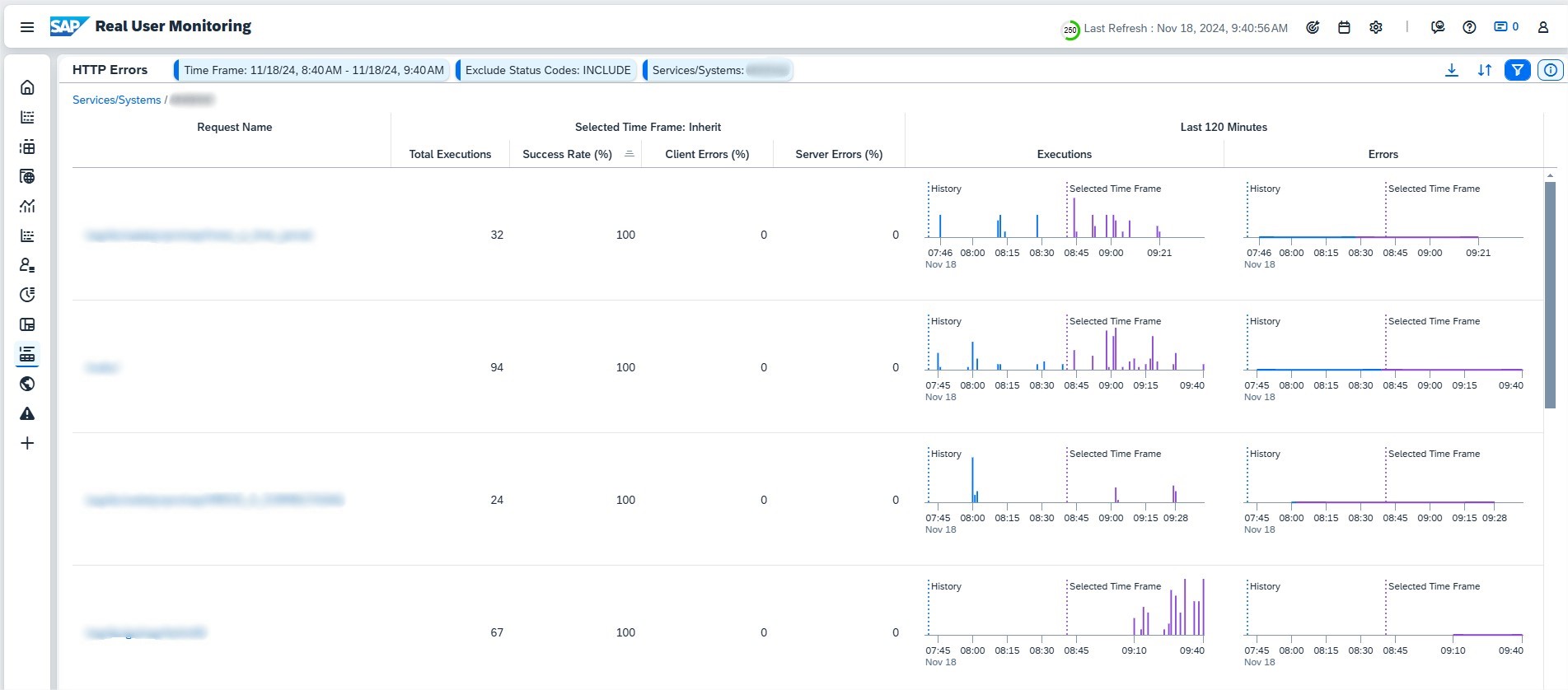

HTTP Errors

The HTTP Errors page provides insights into HTTP(S) request errors across systems and services, enabling quick identification of performance issues.

For each system or service within the selected time frame, the page shows:

- Number of Executions: Total HTTP(S) requests executed.

- Success Rate (%): Percentage of successful calls.

- Client Errors (4xx): Percentage of calls with 4xx status codes.

- Server Errors (5xx): Percentage of calls with 5xx status codes.
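The percentages in this table can be derived from the raw status codes, as in the sketch below. It assumes that every call without a 4xx or 5xx status counts as successful, so that the three percentages add up to 100; the article does not define "successful" more precisely, and the function name and sample codes are hypothetical.

```python
def http_error_summary(status_codes):
    """Summarize HTTP status codes into execution count and percentage rates."""
    total = len(status_codes)
    if total == 0:
        raise ValueError("no executions in the selected time frame")
    client_errors = sum(1 for code in status_codes if 400 <= code <= 499)
    server_errors = sum(1 for code in status_codes if 500 <= code <= 599)
    # Assumption: everything that is not a 4xx or 5xx counts as a success.
    successes = total - client_errors - server_errors
    return {
        "executions": total,
        "success_rate_pct": 100 * successes / total,
        "client_errors_pct": 100 * client_errors / total,
        "server_errors_pct": 100 * server_errors / total,
    }


# Hypothetical status codes recorded for one service.
codes = [200, 200, 204, 302, 404, 404, 500, 200, 200, 503]
print(http_error_summary(codes))
```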

The History section visualizes the trend of HTTP(S) calls and errors over time. An extended period before the selected time frame is included in the charts for context, with the selected time frame highlighted in purple.

Click on a system or service in the table to view detailed data for the corresponding request names within the selected system or service.

The HTTP Errors page equips you with actionable insights to monitor error trends, troubleshoot issues, and ensure high system reliability.

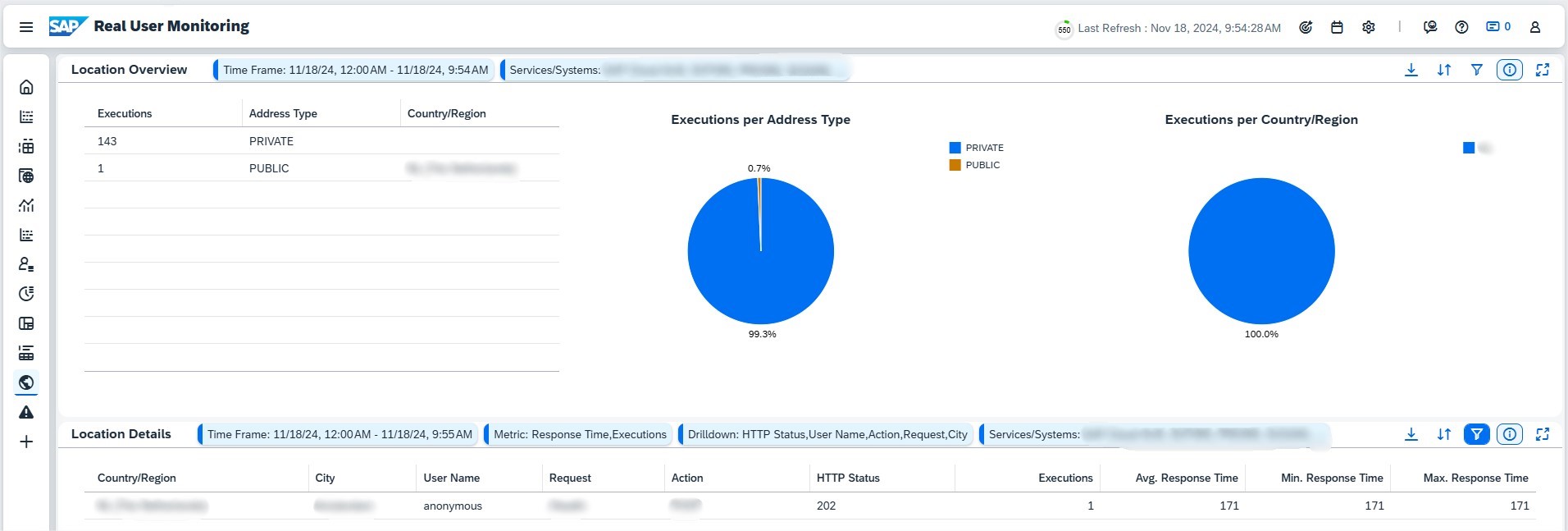

Geolocation

The Geolocation page shows where HTTP(S) requests originate, offering insights into the geographical distribution of traffic for systems and services in scope.

Key Features

- For public cloud services, the caller’s IP address is passed through to the application via X-Forwarded-For, making it possible to assign the IP address to a location. Note that users may alter this information using VPN tools.

- Private cloud services and on-premise systems depend on the network infrastructure configuration to provide location data.

The Location Overview displays the number of requests grouped by country/region and IP address type, including:

- PUBLIC: IP addresses passed through and assigned a location.

- PRIVATE: No location data available for these IP ranges.

- UNKNOWN: IP addresses that cannot be resolved to a location.
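The three address types can be illustrated with Python's standard `ipaddress` module. This is only a sketch: the `KNOWN_LOCATIONS` table is a hypothetical stand-in for a real geolocation database, the function names are invented for the example, and taking the left-most X-Forwarded-For entry as the original caller follows the common convention for that header rather than anything the article specifies.

```python
import ipaddress

# Hypothetical lookup table standing in for a real geolocation database.
KNOWN_LOCATIONS = {"8.8.8.8": "US"}


def client_ip_from_xff(header_value):
    """Return the left-most X-Forwarded-For entry, by convention the original caller."""
    return header_value.split(",")[0].strip()


def classify_ip(raw_ip, locations=KNOWN_LOCATIONS):
    """Return (address type, country) for an IP address string."""
    try:
        ip = ipaddress.ip_address(raw_ip)
    except ValueError:
        return "UNKNOWN", None  # not a valid IP address at all
    if ip.is_private:
        return "PRIVATE", None  # internal ranges carry no location data
    country = locations.get(str(ip))
    if country is None:
        return "UNKNOWN", None  # public address, but no location on record
    return "PUBLIC", country


header = "8.8.8.8, 10.0.0.1"  # caller first, then an intermediate proxy
print(classify_ip(client_ip_from_xff(header)))
print(classify_ip("192.168.1.20"))
print(classify_ip("1.1.1.1"))
```

Because the header is supplied by the client side of the connection, and VPN or proxy tools can change the apparent source address, the resulting location should be read as an indication rather than a guarantee.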

Drill down into specific location data by selecting a country/region from the overview or using the Filter to define a metric. You can explore the following criteria for deeper analysis:

- City

- Request Name

- Action (HTTP method)

- User Name

- HTTP Status (status code)

The Geolocation page helps you visualize the global distribution of user activity, identify regional performance issues, and understand traffic patterns across different locations.

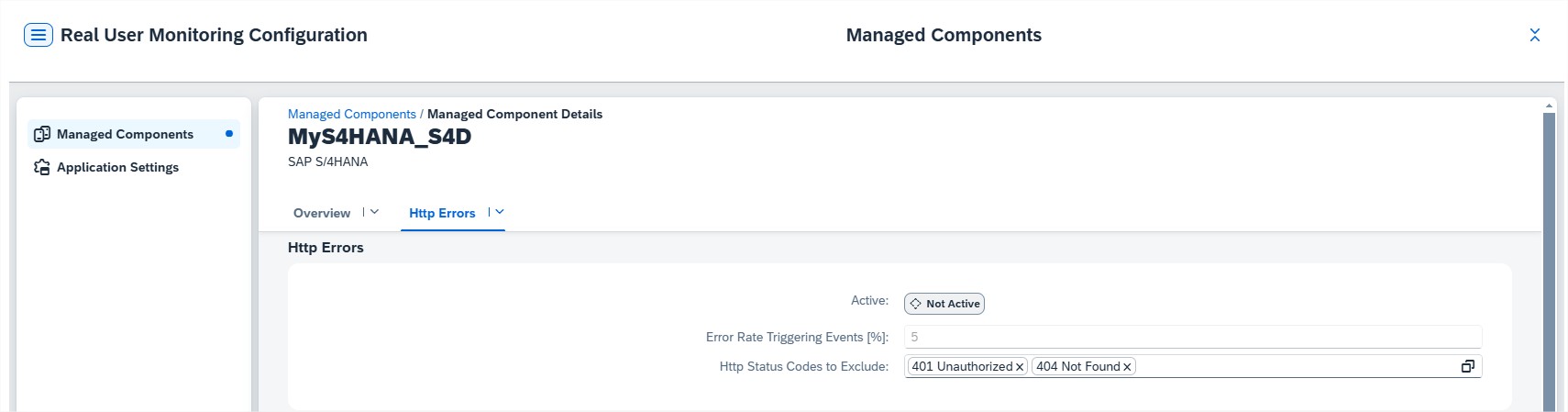

Alerting

The Alerting page provides an overview of all activated alerts, helping you monitor critical system events and take necessary actions.

Key Features

- Alert Types: Currently, HTTP Errors are the primary alerts displayed.

- Configuration: Alerts are activated and configured in the Configuration section of the corresponding managed component.

Actions You Can Take

- Sort Alerts: Sort alerts by Alert Name, Message, Status, Processor, and Object Details.

- Assign/Remove Processors: Manage who is responsible for handling alerts.

- Confirm Alerts: Acknowledge and confirm open alerts.

- View Action Logs: Review the logs associated with each alert for a detailed history.

- Export: Export the list of alerts to a spreadsheet for further analysis.

The Alerting page ensures you can stay on top of critical issues, resolve them efficiently, and maintain system reliability through proactive alert management.

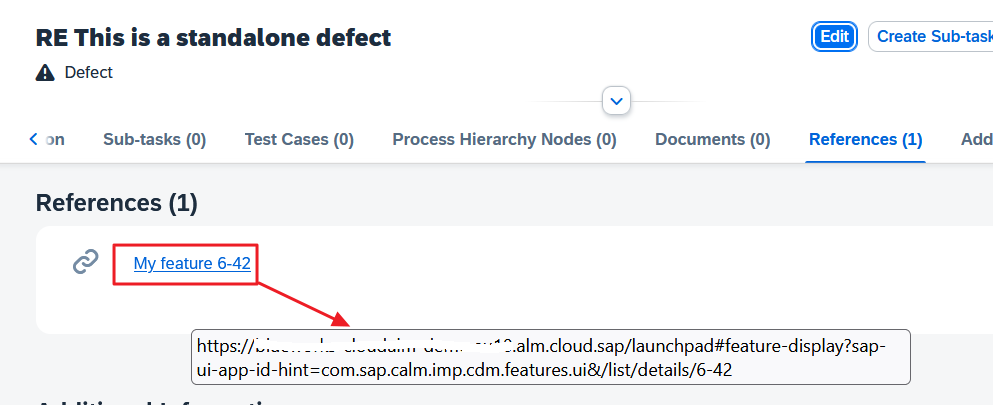

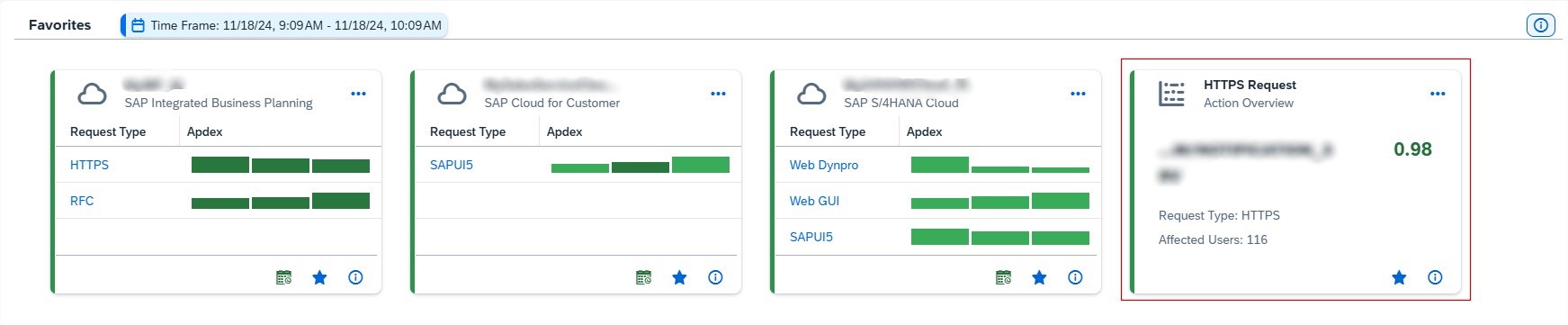

Tip: How to create meaningful favorites for the Overview page

For example, to show only certain requests on the Overview page, open a request and save it as a favorite.

The newly created favorite then appears on the Home page.

Conclusion

With Real User Monitoring, organizations gain transparency into user interactions, response times, and system performance. This tool not only enhances IT teams’ ability to resolve issues efficiently but also empowers business teams with valuable insights into user behavior. As a result, Real User Monitoring helps organizations provide a seamless, optimized user experience, enhancing both operational efficiency and end-user satisfaction.